AI & News

Analysis and news on ethical AI, governance, and human impact. Articles produced by our AI + editorial team.

Autonomous AI Agents: Innovation Meets Ethical Governance Challenge

AI research is advancing autonomous agents in web navigation, scientific automation, and gaming. This evolution demands urgent reflection on how these agents are evaluated, on their biases, and on the need for robust ethical AI governance.

AI: The Dual Path of Innovation and Accountability

As AI research pushes boundaries, critical questions of ethics and accountability emerge. Apple's recent Siri settlement highlights the urgent need for robust AI governance.

OpenAI Internal Conflicts: The Challenge of AI Governance

Greg Brockman's testimony in the Musk-OpenAI lawsuit reveals deep tensions, raising crucial questions about AI giants' governance and public trust. The case highlights the need for ethical, transparent AI.

New Frontiers in AI Retrieval: Towards More Robust and Transparent Systems

AI retrieval research is evolving rapidly to overcome current limitations. New studies explore systems that are more robust to data shifts and models that are more transparent, capable of providing clear, contextual explanations. Both are essential for **ethical AI** and trustworthy applications.

AI as Criminal Mastermind and the Challenge to Military Decision Sovereignty

New academic studies highlight the risks of artificial intelligence acting as a criminal orchestrator and the challenges to decision sovereignty in military applications. The findings are a crucial warning for **ethical AI** and governance.

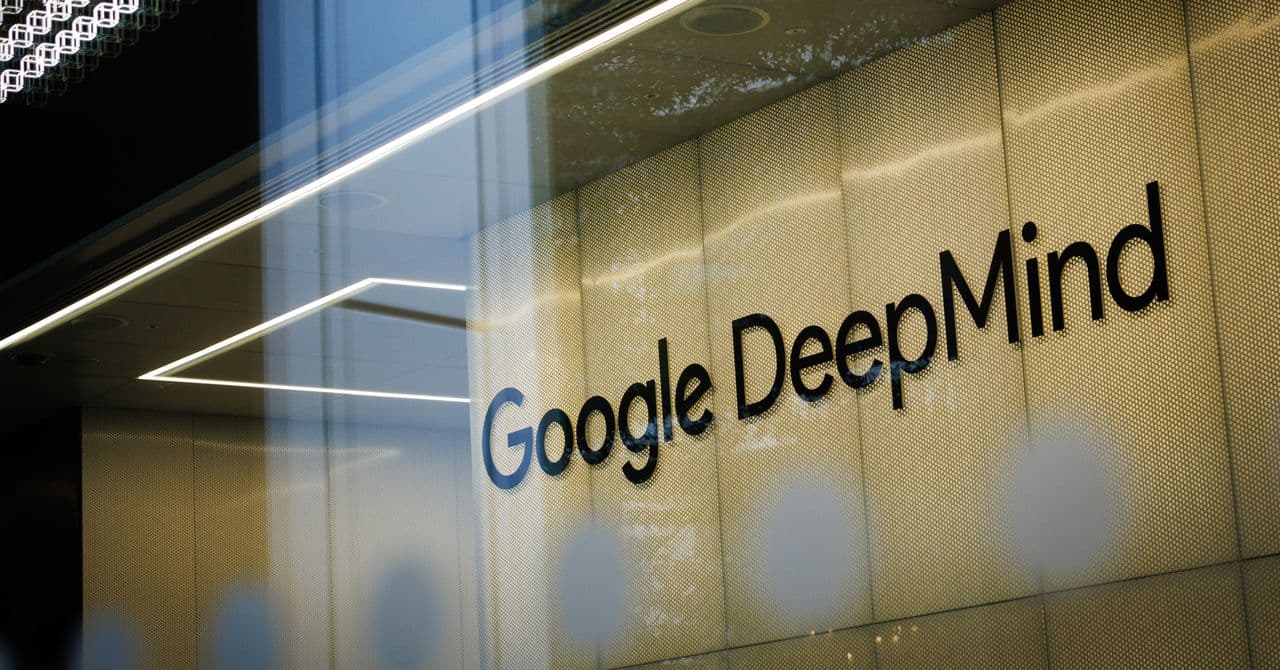

Ethical AI and Labor: DeepMind Unionization and Hiring Bias

Google DeepMind employees unionize to oppose military AI use, while the use of AI in hiring raises separate concerns about bias and transparency. These incidents highlight the growing urgency for ethical and responsible AI in the workplace and beyond.

LLM Memory and Security: New Ethical Challenges Emerge

Recent studies reveal Large Language Models struggle to maintain consistent security and privacy policies in long contexts. This raises crucial questions about AI reliability and governance, particularly in critical applications.

New Strategies for LLM Safety and Reliability

Research progresses with new fine-tuning and defense techniques for Large Language Models (LLMs), tackling critical challenges like internal safety collapse and RAG system protection. These developments are crucial for more ethical and reliable AI.

Musk v. Altman: OpenAI's Transparency and Governance Under Scrutiny

The Musk v. Altman trial is revealing significant internal dynamics and external influences on OpenAI, raising crucial questions about transparency, governance, and ethics in AI development. Testimonies underscore the need for clarity in the AI sector.

Disneyland Implements Facial Recognition: New Ethical AI Challenges

Disneyland has implemented facial recognition for visitors, raising immediate questions about privacy and biometric data usage. This decision reignites the debate on balancing security, user experience, and the core principles of **ethical AI** in public spaces.

New Research Boosts AI: Enhanced Privacy, Reasoning, and Evaluation

Recent arXiv research reveals significant AI advancements, from differentially private model merging to sophisticated multimodal reasoning and novel evaluation metrics. These developments are crucial for building more robust and trustworthy AI systems, with direct impacts on responsible adoption.

Strategic Polysemy in AI Discourse: Language, Hype, and Perception Impact

A new study explores how terms like 'hallucination' or 'agent' in AI create strategic polysemy, blending technical definitions with anthropomorphic associations. This impacts public perception and ethical AI governance.