AI: Between Cognitive Amplification and New Vulnerabilities – The Challenge of Coexistence

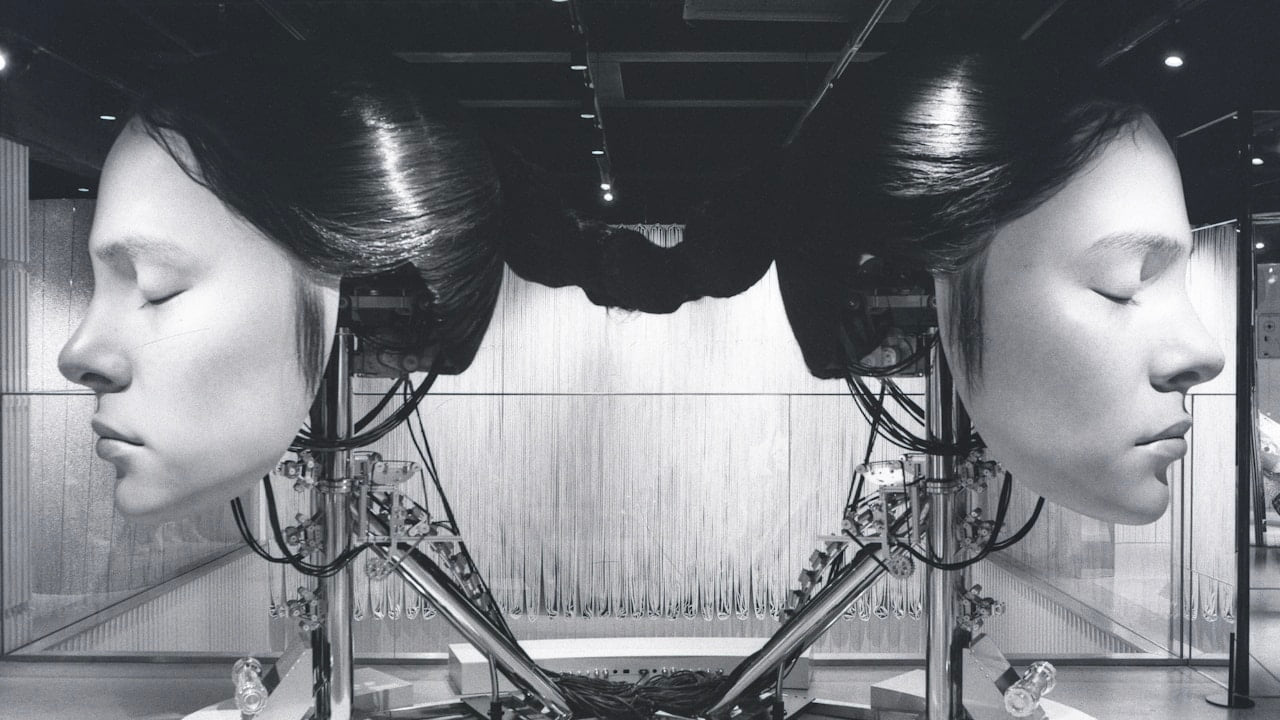

Artificial intelligence is redefining our relationship with technology, offering tools to enhance human capabilities while simultaneously introducing new challenges related to security, reliability, and cognitive autonomy.

What happened

A recent study, Cognitive Amplification vs Cognitive Delegation in Human-AI Systems: A Metric Framework, introduced a conceptual framework to distinguish cognitive amplification from cognitive delegation in human-AI systems. Amplification occurs when AI improves human performance while preserving expertise, whereas delegation involves a progressive outsourcing of reasoning to the AI, risking long-term atrophy of human capabilities. This distinction is crucial as AI integrates into increasingly sensitive contexts.

In parallel, new vulnerabilities are emerging. Multimodal Large Language Models (MLLMs), for instance, exhibit novel safety failures when instructed through structured visual narratives. The study Structured Visual Narratives Undermine Safety Alignment in Multimodal Large Language Models demonstrated that simple "comic-template jailbreaks" can induce models to generate harmful content, circumventing their safety alignment. Visual input thus opens new avenues for bypassing safeguards that were designed mainly around text.

Even in areas like security code review, the integration of LLMs introduces risks. A study, Measuring and Exploiting Contextual Bias in LLM-Assisted Security Code Review, revealed that a "framing effect" influences LLMs' ability to detect vulnerabilities: contextual bias injected into pull request metadata can manipulate the model's security assessment. This raises questions about the reliability of AI tools in critical processes.
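To make the framing effect concrete, the sketch below measures how often a reviewer that flags a vulnerability under a neutral pull request description misses it once the description is reworded. It is an illustration only, not the study's methodology: `framing_flip_rate`, the `Reviewer` signature, and `toy_reviewer` (a deliberately biased stand-in for an LLM call) are all hypothetical names introduced here.

```python
from typing import Callable

# Hypothetical reviewer signature: (diff, pr_description) -> True if a vulnerability is flagged.
Reviewer = Callable[[str, str], bool]


def framing_flip_rate(reviewer: Reviewer, diffs: list[str],
                      neutral: str, framed: str) -> float:
    """Fraction of diffs flagged under the neutral description but missed under the framed one."""
    flagged_neutral = [d for d in diffs if reviewer(d, neutral)]
    if not flagged_neutral:
        return 0.0
    flipped = [d for d in flagged_neutral if not reviewer(d, framed)]
    return len(flipped) / len(flagged_neutral)


def toy_reviewer(diff: str, description: str) -> bool:
    """Toy stand-in for an LLM reviewer, deliberately swayed by reassuring wording."""
    risky = "strcpy(" in diff
    reassured = "already audited" in description.lower()
    return risky and not reassured


diffs = [
    "strcpy(buf, user_input);",
    "strncpy(buf, user_input, sizeof buf - 1);",
    "strcpy(dst, src);",
]
rate = framing_flip_rate(
    toy_reviewer,
    diffs,
    neutral="Refactor string handling.",
    framed="Minor cleanup, already audited by the security team.",
)
print(f"flip rate: {rate:.2f}")
```

With a real model in place of `toy_reviewer`, a flip rate well above zero on identical diffs would suggest that the metadata, not the code, is driving the verdict.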

Academic impact assessment is not immune either. The Crystal system, proposed in Crystal: Characterizing Relative Impact of Scholarly Publications, aims to mitigate LLM positional bias in ranking citations, but the very need for such corrections shows that these distortions persist in the underlying models. Finally, the use of AI for human presence detection via Wi-Fi on commodity laptops (Human Presence Detection via Wi-Fi Range-Filtered Doppler Spectrum on Commodity Laptops) offers advantages in power management and security, yet it also raises the question of privacy and unobtrusive monitoring, requiring a careful balance between functionality and individual rights.
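The positional bias mentioned above for citation ranking can be illustrated with a common, generic countermeasure: ask the judge to compare two items in both orders and accept the verdict only if it survives the swap. This is a minimal sketch under stated assumptions, not a description of how Crystal works; `Judge`, `order_robust_preference`, and the two toy judges are hypothetical names introduced for illustration.

```python
from typing import Callable, Optional

# Hypothetical judge: given (first, second), returns 0 if it prefers the first item, 1 for the second.
Judge = Callable[[str, str], int]


def order_robust_preference(judge: Judge, a: str, b: str) -> Optional[str]:
    """Query the judge in both orders; return the winner only if both verdicts agree."""
    winner_ab = (a, b)[judge(a, b)]
    winner_ba = (b, a)[judge(b, a)]
    return winner_ab if winner_ab == winner_ba else None


def always_first(first: str, second: str) -> int:
    """Toy judge with pure positional bias: always picks whatever is shown first."""
    return 0


def prefers_longer(first: str, second: str) -> int:
    """Toy judge that decides on content (length), independent of position."""
    return 0 if len(first) >= len(second) else 1


biased = order_robust_preference(always_first, "paper A", "paper B")
stable = order_robust_preference(prefers_longer, "short", "much longer text")
print(biased, stable)
```

Returning `None` for inconsistent verdicts is a design choice: it exposes the bias instead of silently averaging it away, so a human can inspect the disputed pair.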

Why it matters

The distinction between cognitive amplification and delegation is fundamental for the future of work and human development. If AI leads to progressive delegation, we risk an atrophy of critical skills, judgment, and human creativity. This not only diminishes the value of human contribution but also makes organizations and individuals more vulnerable to errors or biases inherent in AI systems, which might go unnoticed due to reduced human oversight.

The emerging vulnerabilities in MLLMs and in LLM-assisted code review have direct implications for cybersecurity and trust in AI systems. If models can be deceived by visual narratives or manipulated by contextual biases, their application in critical sectors such as healthcare, finance, or defense could have serious consequences. Compromised integrity in evaluation processes, such as the assessment of academic publications, undermines meritocracy and the advancement of knowledge. The spread of technologies like Wi-Fi presence detection, if unregulated, could erode the boundaries of individual privacy and normalize ubiquitous surveillance that is not always transparent.

The HDAI perspective

Human Driven AI's perspective is clear: technological innovation must always be guided by a human-centric approach. We must design AI systems that act as intelligent co-pilots, amplifying our cognitive capabilities rather than replacing them. This requires a constant commitment to research and development of robust, transparent AI aligned with human values.

It is imperative to invest in advanced safety mechanisms for multimodal models, develop rigorous methodologies to mitigate contextual and positional biases, and ensure that human users always maintain an active and critical role in supervising and validating AI decisions. AI governance must anticipate and address these challenges, promoting a regulatory framework that balances innovation with the protection of fundamental rights, including privacy and cognitive autonomy. Only then can we build a future where AI is a true ally of humanity.

What to watch

Research into "jailbreak" techniques and countermeasures for multimodal models will continue to evolve rapidly. It will be crucial to monitor developments in mitigating biases in LLMs, especially in critical applications like security review and scholarly evaluation. Frameworks like the one distinguishing cognitive amplification from cognitive delegation could offer metrics to guide responsible AI development. The debate on privacy in Wi-Fi presence detection and other invisible sensing technologies is also likely to intensify, with potential regulatory implications.